OpenAI just launched a research competition that doubles as a hiring pipeline, and it comes with up to $1 million in free compute credits. The OpenAI Model Craft Challenge is a new technical competition series designed to test how well you can build AI models under extreme constraints. The first event, called Parameter Golf, went live on March 18, 2026, and runs through April 30, 2026.

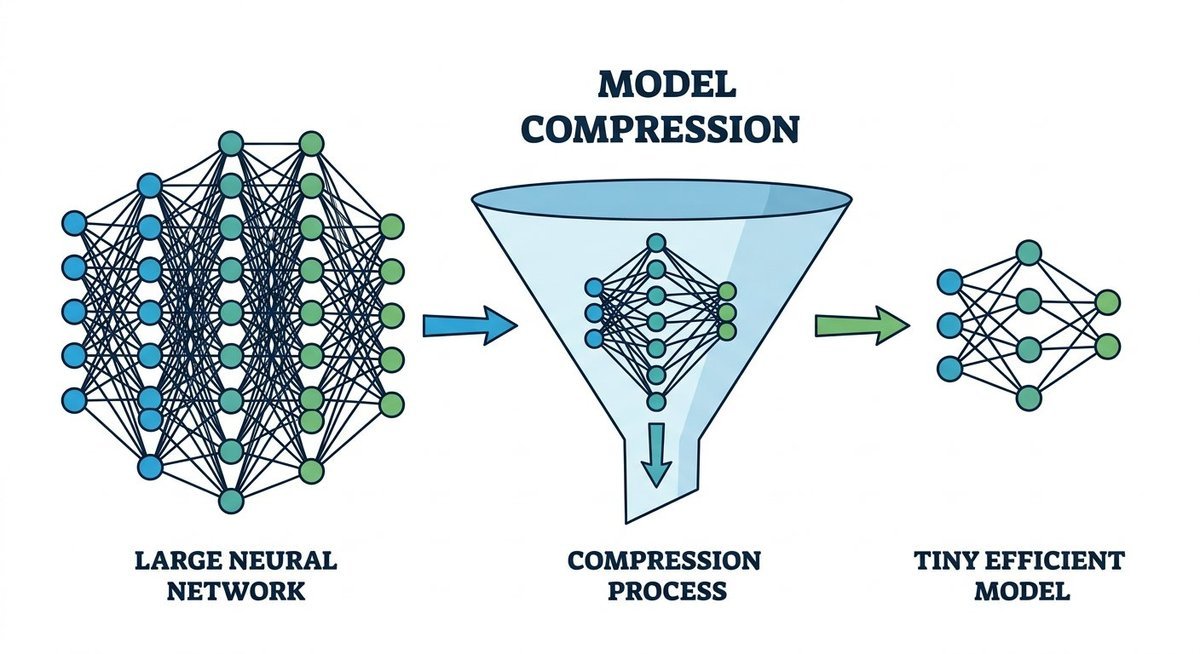

The premise is deceptively simple: train the strongest language model you can while keeping the total artifact (weights plus training code) under 16 megabytes. You get 10 minutes on eight H100 GPUs. That’s it. The lowest bits-per-byte score on the validation set wins.

This guide breaks down the challenge rules, eligibility requirements, how submissions work, the RunPod partnership backing it, and the real reason OpenAI built this: to find and recruit exceptional AI talent outside traditional channels.

What Is Parameter Golf? Inside OpenAI’s First Model Craft Challenge

Parameter Golf is a model efficiency competition where participants train a language model that fits within a strict 16MB size limit and achieves the lowest possible score on a standardized benchmark. OpenAI designed it to mirror the exact kind of problem their pre-training researchers tackle daily — squeezing maximum performance from minimal resources.

The 16MB and 10-Minute Constraints

Two hard constraints define every submission. First, your total artifact size — model weights plus all training code combined — cannot exceed 16,000,000 bytes. That’s 16MB in decimal, not 16 MiB (16,777,216 bytes), so plan accordingly. Second, your model must train to completion within 10 minutes on a cluster of eight NVIDIA H100 GPUs.

The artifact must be entirely self-contained. No external downloads, no network calls during evaluation. Everything the model needs to run must ship inside that 16MB package.
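
A quick way to keep the decimal limit in view is to sum the on-disk size of everything you plan to ship. The sketch below is only an illustration: the helper names are hypothetical, and how the official tooling measures the artifact is not described here, so treat the file list as an assumption.

```python
from pathlib import Path

# Decimal 16 MB limit stated in the rules (not 16 MiB = 16,777,216 bytes).
MAX_ARTIFACT_BYTES = 16_000_000


def artifact_size(paths):
    """Return the combined on-disk size, in bytes, of the given files."""
    return sum(Path(p).stat().st_size for p in paths)


def fits_budget(paths, limit=MAX_ARTIFACT_BYTES):
    """True if the combined size of the files is within the limit."""
    return artifact_size(paths) <= limit
```

Budgeting against 16 MiB by mistake would overshoot the real limit by 777,216 bytes, which is a meaningful slice of a model this small.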

How Submissions Are Scored: Bits Per Byte (bpb)

Performance is measured using bits per byte (bpb) on a fixed FineWeb validation set consisting of the first 50,000 documents. This metric is tokenizer-agnostic — it doesn’t matter what vocabulary or tokenization scheme you use. Lower scores win.
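
To see why the metric does not depend on the tokenizer, it helps to write it out: bpb is the total cross-entropy of the text in nats, divided by ln 2 to convert to bits, divided by the number of UTF-8 bytes in the text. The following is a minimal sketch of that conversion, assuming you already have a summed loss in nats; the function names are illustrative and are not taken from the official evaluation code.

```python
import math


def bits_per_byte(total_nats, total_bytes):
    """Convert a summed cross-entropy (nats) over a text into bits per byte."""
    if total_bytes <= 0:
        raise ValueError("total_bytes must be positive")
    return total_nats / (math.log(2) * total_bytes)


def bpb_from_mean_token_loss(mean_nats_per_token, n_tokens, n_bytes):
    """Same metric, starting from the usual per-token mean training loss."""
    return bits_per_byte(mean_nats_per_token * n_tokens, n_bytes)
```

The token count only appears as a multiplier on the mean loss, so two tokenizers that assign the same total probability to a document get the same bpb no matter how many tokens each one produces. As a sanity check, a model that guesses uniformly over all 256 byte values scores exactly 8 bpb.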

The baseline, posted on March 18, 2026, sits at 1.2244 bpb using a 9-layer architecture with 512 dimensions, a 1024-token vocabulary, tied embeddings, and 4 KV heads. A non-record run by Will DePue reached 1.2074 bpb with a 4-hour training window, which shows the baseline can be beaten once the time limit is relaxed.

| Constraint | Specification | Key Detail |
|---|---|---|
| Artifact size | 16,000,000 bytes max | Includes weights + code, decimal not MiB |
| Training time | 10 minutes | 8x NVIDIA H100 GPUs |
| Evaluation dataset | FineWeb validation set | First 50,000 documents, fixed |
| Scoring metric | Bits per byte (bpb) | Tokenizer-agnostic, lower wins |
| Self-contained | Required | No external downloads during evaluation |

How to Enter: Submission Process Step by Step

Every submission flows through GitHub. You fork the official parameter-golf repository, build your model, and submit a pull request. There’s no registration form gating access — the repo is public and open to anyone who meets the eligibility criteria.

What Your Pull Request Must Include

A valid submission requires four components:

- README.md — A short write-up describing your approach, architecture decisions, and any novel techniques used

- submission.json — Metadata file with your model configuration and claimed score

- Training logs — Full logs proving your model trained within the 10-minute, 8xH100 compute budget

- train_gpt.py — A working, executable training script that reproduces your results
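
Before opening a pull request, a small pre-flight check can confirm the four components are present. This is a sketch under assumptions: the three file names come from the list above, but the `*.log` pattern for training logs is hypothetical, since no naming convention for logs is given here.

```python
from pathlib import Path

# File names taken from the submission checklist.
REQUIRED_FILES = ("README.md", "submission.json", "train_gpt.py")


def missing_components(submission_dir, log_glob="*.log"):
    """List required components that are absent from submission_dir.

    log_glob is a hypothetical naming pattern for training logs.
    """
    root = Path(submission_dir)
    missing = [name for name in REQUIRED_FILES if not (root / name).is_file()]
    if not any(root.glob(log_glob)):
        missing.append(f"training logs ({log_glob})")
    return missing
```

An empty list means every component was found; anything else names what still needs to be added.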

All submitted code must be open source under the MIT license and hosted in a public personal GitHub repository. OpenAI reviews each PR for reproducibility before it appears on the leaderboard.

Record Submissions vs. Non-Record Submissions

The leaderboard distinguishes two categories. Record submissions must beat the existing SOTA by at least 0.005 nats with statistical significance (p < 0.01), train within the 10-minute window, and prove correct val_bpb calculation. Non-record submissions are welcome for experimental or novel approaches that exceed the compute limit — OpenAI explicitly encourages these to foster exploration, even if they don’t land on the official board.
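
The rules as summarized here do not say which statistical test is used, so the following is only one plausible reading: treat several training runs (for example, different seeds) as samples and run a one-sided, one-sample t-test on whether the mean improvement exceeds the 0.005 margin. The function name is hypothetical, and the sketch compares scores and margin in the same unit, which is an assumption. The critical values are the standard one-sided Student's t values at alpha = 0.01.

```python
import math
import statistics

# One-sided critical t values at alpha = 0.01, keyed by degrees of freedom.
T_CRIT_01 = {1: 31.821, 2: 6.965, 3: 4.541, 4: 3.747, 5: 3.365,
             6: 3.143, 7: 2.998, 8: 2.896, 9: 2.821, 10: 2.764}


def beats_record(new_scores, sota, margin=0.005):
    """Assumed test: is the mean improvement over sota larger than margin?"""
    n = len(new_scores)
    df = n - 1
    if df not in T_CRIT_01:
        raise ValueError("this table covers 2 to 11 runs")
    # Improvement beyond the required margin, per run (lower score is better).
    excess = [sota - s - margin for s in new_scores]
    mean_excess = statistics.mean(excess)
    std_err = statistics.stdev(excess) / math.sqrt(n)
    if std_err == 0:
        return mean_excess > 0
    return mean_excess / std_err > T_CRIT_01[df]
```

With only a handful of runs the critical value is large, so run-to-run variance matters almost as much as the mean: a noisy improvement of 0.006 can fail where a tight 0.0055 passes.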

Eligibility Requirements and Official Rules

The barrier to entry is deliberately low. OpenAI wants broad participation — the challenge is designed so that a talented undergraduate with a laptop can compete against well-resourced research labs on equal footing.

Who Can Participate

You must be at least 18 years old and a legal resident of a country not subject to U.S. trade sanctions. A valid GitHub account and email address are the only technical prerequisites. Completing OpenAI’s participant interest form is encouraged — it helps them contact you about potential opportunities — but not required to submit.

Licensing and Intellectual Property

Every submission must be released under the MIT license in a public GitHub repository. Participants must comply with GitHub’s Terms of Service and all applicable laws. There is no transfer of IP to OpenAI — your work stays yours, but the open-source requirement means anyone can study, fork, and build on it.

$1 Million in Compute Credits: The RunPod Partnership

RunPod is the official infrastructure partner for the Model Craft Challenge series. Together with OpenAI, they are distributing up to $1,000,000 in compute credits throughout the challenge period to lower the hardware barrier for participants.

How to Get Free Compute Credits

Eligible participants can request credits through a form on the OpenAI Parameter Golf page. Availability is limited and distributed on a rolling basis throughout the competition window. Once approved, credits can be redeemed on RunPod’s platform with access to H100, H200, and P2-series GPUs.

The Pre-Configured RunPod Template

RunPod provides an official challenge template with a preloaded Docker image and the parameter-golf repository already configured. You can go from credit redemption to running experiments in minutes — no environment setup required.

As Zach Gulsby, RunPod’s Partnerships Lead, put it: “Parameter Golf is exactly the kind of technically demanding, open competition the builder community thrives on.”

For those without GPU access or credits, the repository also includes an Apple Silicon MLX training script for local development on Mac hardware. You can prototype and iterate locally before deploying to H100s for your final submission run.

Winning Strategies: Techniques Worth Exploring

The GitHub repository lists several research directions that OpenAI considers promising. These aren’t prescriptive — the whole point is to surprise the judges with something novel — but they signal where the organizers believe breakthroughs are possible.

Architecture and Training Innovations

- Depth recurrence — Reuse layers multiple times during forward passes to get more effective depth from fewer parameters

- Aggressive parameter tying — Share weights across components to reduce total parameter count without proportional performance loss

- Low-rank training — Constrain weight matrices to low-rank approximations that compress efficiently
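
The parameter arithmetic behind two of these ideas can be sketched in a few lines. This is a toy illustration in plain Python rather than the repository's code: layers are stand-in callables, and all function names are hypothetical.

```python
def dense_params(d_in, d_out):
    """Parameter count of a full weight matrix."""
    return d_in * d_out


def low_rank_params(d_in, d_out, rank):
    """Parameter count when the matrix is factored as (d_in x r) @ (r x d_out)."""
    return rank * (d_in + d_out)


def recurrent_forward(x, layers, repeats):
    """Depth recurrence: run the same stack of layers several times."""
    for _ in range(repeats):
        for layer in layers:
            x = layer(x)
    return x


def effective_depth(unique_layers, repeats):
    """Number of layer applications per forward pass."""
    return unique_layers * repeats
```

At the baseline width of 512, a full 512 x 512 matrix holds 262,144 parameters, while a rank-64 factorization holds 65,536. Likewise, three unique layers applied three times give nine layer applications per forward pass while storing the weights of only three.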

Compression and Quantization

- Quantization-aware training (QAT) — Train with quantization in the loop so the model learns to perform well at reduced precision

- Int8 quantization + zlib compression — The baseline uses this combination; the “final_int8_zlib_roundtrip” metric in the training output shows your compressed size

- BitNets — Extreme low-bit-width architectures designed from the ground up for tiny model footprints
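
To make the int8-plus-zlib idea concrete, here is a minimal round trip using only the Python standard library. It assumes symmetric per-tensor quantization with a single scale, which is one common choice and not necessarily what the baseline does; the function names are illustrative.

```python
import array
import zlib


def quantize_int8(weights):
    """Symmetric per-tensor quantization: floats -> int8 values plus one scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Clamp to [-127, 127] so rounding noise can never overflow int8.
    q = array.array("b", (max(-127, min(127, round(w / scale))) for w in weights))
    return q, scale


def pack(weights):
    """Quantize, then compress the raw int8 bytes with zlib at level 9."""
    q, scale = quantize_int8(weights)
    return zlib.compress(q.tobytes(), 9), scale


def unpack(blob, scale):
    """Decompress and dequantize back to floats."""
    q = array.array("b")
    q.frombytes(zlib.decompress(blob))
    return [v * scale for v in q]
```

The zlib step is lossless, so all of the error comes from rounding, which is at most half a quantization step per weight. How much zlib saves depends on how repetitive the quantized values are, which is one reason training choices and compression interact.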

Tokenizer and Data Strategies

The evaluation is tokenizer-agnostic, which opens a powerful optimization axis. Reducing vocabulary size from the baseline 1024 tokens — or redesigning the tokenizer entirely — can free up bytes in your artifact budget for more model capacity. The baseline uses SentencePiece with 1024 tokens, but nothing stops you from experimenting with BPE variants, character-level approaches, or custom schemes.
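
A rough way to reason about this trade-off is to count the bytes the embedding table occupies before compression. The sketch below assumes one byte per parameter (int8 storage) and uses a hypothetical helper name; real artifact sizes will differ after zlib.

```python
def embedding_bytes(vocab_size, dim, bytes_per_param=1, tied=True):
    """Uncompressed size of the embedding table(s).

    With tied embeddings the input and output tables share one matrix;
    untied models store two.
    """
    tables = 1 if tied else 2
    return vocab_size * dim * bytes_per_param * tables
```

With the baseline's 1024-token vocabulary and 512 dimensions, the tied table comes to 524,288 bytes under these assumptions, and a 256-entry vocabulary would need 131,072. The saving is not free, though: a smaller vocabulary means more tokens per byte of text, so the model has to make more predictions to cover the same document.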

| Strategy Category | Example Techniques | Potential Impact |
|---|---|---|
| Architecture | Depth recurrence, parameter tying, low-rank | More effective parameters per byte |
| Compression | QAT, int8+zlib, BitNets | Smaller artifact at same performance |
| Tokenization | Custom vocabularies, character-level models | Better bpb through encoding efficiency |
| Training efficiency | Megakernels, optimized CUDA, mixed precision | More training steps in 10-minute window |

The Real Endgame: OpenAI’s Talent Pipeline

Parameter Golf is a competition on the surface. Underneath, it’s a structured recruiting funnel. OpenAI has stated openly that standout participants will be invited for job interviews, and the company plans to hire a small cohort of early-career researchers in June 2026.

The target profile is specific: current undergraduate students, recent graduates, and competition medalists — people who may lack traditional ML credentials but demonstrate exceptional problem-solving instincts. Will DePue, who leads an OpenAI research team, built his group from former mathematicians, neuroscientists, and physicists. None had formal machine learning education. All had deep experience solving hard problems with unconventional approaches.

This hiring philosophy puts the Model Craft Challenge in a different category from typical industry competitions. The $1M in compute credits, the open-source requirement, and the GitHub-native submission process all reduce friction for exactly the kind of non-traditional talent OpenAI wants to find. If you’re an undergrad training models on a gaming laptop and can beat the 1.2244 bpb baseline, you have a legitimate path to an OpenAI research role.

The broader context matters too. AI talent competition is fierce — The Decoder reports that Meta has offered packages worth approximately $300 million to poach top researchers from OpenAI. Building a public, meritocratic competition is OpenAI’s answer to that arms race: find talent before they enter the traditional pipeline, prove their ability through open work, and recruit based on demonstrated skill rather than credentials.

Frequently Asked Questions

What exactly is the OpenAI Model Craft Challenge?

The OpenAI Model Craft Challenge is a technical competition series launched by OpenAI in March 2026. The first event, Parameter Golf, challenges participants to train the most efficient language model possible within a 16MB artifact limit and a 10-minute training window on 8x H100 GPUs. Performance is scored using bits per byte (bpb) on the FineWeb validation dataset.

Does it cost anything to participate?

No. The challenge is free to enter. OpenAI and RunPod are distributing up to $1 million in compute credits so participants can train models on H100, H200, and P2-series GPUs at no cost. You can also develop locally using the provided Apple Silicon MLX script.

When is the deadline for Parameter Golf?

Submissions close on April 30, 2026. The challenge opened on March 18, 2026. Submit your pull request to the official GitHub repository before the deadline.

Who is eligible to enter?

Anyone 18 or older who is a legal resident of a non-sanctioned country and has a valid GitHub account. No formal ML background is required. Both individuals and teams can submit, though each submission must come from a single GitHub repository and pull request.

Can this challenge lead to a job at OpenAI?

Yes. OpenAI has stated that standout participants may receive interview invitations. The company plans to hire a small cohort of early-career researchers in June 2026, specifically targeting undergraduates, recent graduates, and competition medalists.

What hardware do I need?

Official leaderboard submissions must reproduce on 8x H100 GPUs within 10 minutes. RunPod provides free compute credits and a pre-configured Docker template for this. For local development and prototyping, you can use an Apple Silicon Mac with the included MLX training script — then deploy to H100s for your final run.

What is bits per byte (bpb) and why does it matter?

Bits per byte measures how efficiently a language model compresses text. A lower bpb score means the model predicts text more accurately per byte of input. The metric is tokenizer-agnostic, so it creates a fair comparison regardless of vocabulary size or tokenization strategy. The current baseline is 1.2244 bpb.

What if my model doesn’t beat the baseline?

Submit anyway. OpenAI accepts non-record submissions that demonstrate novel architectures, interesting techniques, or creative approaches, even if they exceed the compute limit. These entries appear in a separate section and still demonstrate your capabilities to the recruitment team.