OpenClaw’s early adoption outpaced that of Linux. Within three weeks of launch, millions of users had installed it: developers, writers, researchers, and solo operators running automations that once took entire teams. Then the ClawHavoc supply chain attack hit in February 2026, compromising over 9,000 installations through malicious plugins. The party slowed. NVIDIA’s answer, NemoClaw, arrived not as a replacement but as a controlled runtime designed to make agentic AI safe enough for enterprise deployments.

Understanding which platform fits your workflow requires knowing what each one actually is. They are not direct rivals. They represent two different bets on where AI agents belong in 2026: one on your local machine, fast and community-built; the other inside your data center, audited and policy-controlled.

What Is OpenClaw? The Viral Consumer Agent

OpenClaw started as an open-source project built by developer Peter Steinberger. It runs locally on your machine as an AI assistant capable of writing, coding, file operations, and integrations with Discord, iMessage, and Slack. OpenAI acquired it in February 2026, weeks after it had already outpaced Linux’s early adoption curve — a benchmark that shocked even its own community.

The community ecosystem is OpenClaw’s defining strength. Over 5,000 skills are available, ranging from browser automation to document summarization and API chaining. A developer can install OpenClaw, add a handful of community skills, and have a capable local agent running in under an hour. For solo builders and technical users, that speed is genuinely hard to beat.

The tradeoff is security. OpenClaw was not designed with enterprise compliance in mind. Documented vulnerabilities include API key leakage, malicious community skills capable of stealing credentials, and remote code execution exploits. After the ClawHavoc supply chain attack, which embedded credential-harvesting code into widely used plugins, both Meta and LangChain restricted employee use. OpenClaw’s model of admin-level local access makes it powerful and convenient, but it creates risks that compliance-sensitive organizations cannot absorb.

What Is NemoClaw? NVIDIA’s Enterprise Runtime Layer

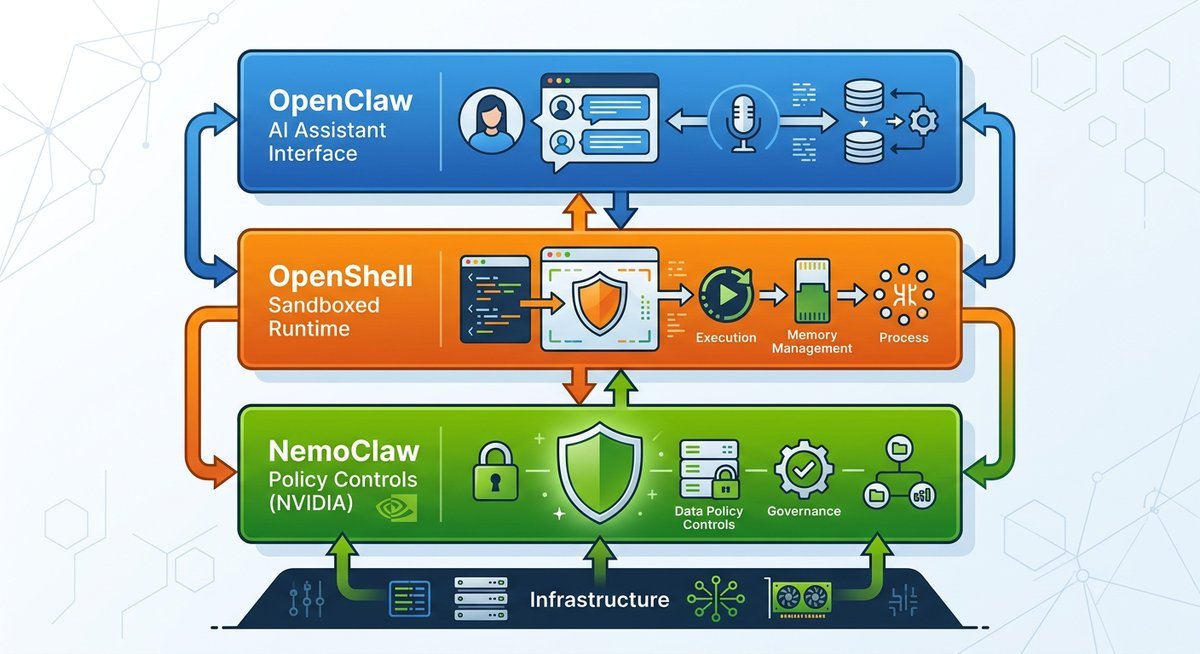

NemoClaw is not a standalone AI agent. That distinction matters. NVIDIA built it as a policy-aware runtime layer that operates within a three-component stack: OpenClaw as the assistant interface, OpenShell as a sandboxed execution environment, and NemoClaw as the policy and control layer sitting between them. The architecture means enterprises can continue using OpenClaw’s assistant capabilities while NemoClaw enforces the boundaries that regulated industries require.

Announced at GTC 2026 in March, NemoClaw shipped as alpha software targeting Ubuntu 22.04+ environments with Docker and NVIDIA OpenShell installed. Its current scope is deliberately narrow: it does not try to replicate OpenClaw’s consumer experience or its skill marketplace. It provides the infrastructure layer that lets organizations deploy AI agents without handing them unconstrained system access.

Core NemoClaw Capabilities

NVIDIA documented four core capabilities at launch. Sandboxed execution uses Linux network namespaces and filesystem restrictions to isolate agent operations from the host system; it is the most structurally important feature for enterprise adoption. Policy controls gate sensitive tool calls behind operator approval before execution, which prevents agents from acting autonomously outside defined boundaries. Inference routing is flexible: teams can point NemoClaw at NVIDIA-hosted endpoints, locally deployed NIM microservices, or self-hosted vLLM instances, depending on data residency requirements. Finally, operational tooling handles monitoring and audit logging for compliance review.
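The operator-approval idea can be sketched in a few lines of Python. This is an illustration of the general pattern only, not NemoClaw’s actual API: `ToolCall`, `PolicyGate`, the `sensitive_tools` set, and the `approver` callback are hypothetical names introduced for this example.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ToolCall:
    """A request by the agent to run a named tool (hypothetical shape)."""
    tool: str
    args: dict = field(default_factory=dict)


class PolicyGate:
    """Runs tool calls, but asks an operator first when the tool is sensitive."""

    def __init__(self, sensitive_tools: set[str],
                 approver: Callable[[ToolCall], bool]) -> None:
        self.sensitive_tools = sensitive_tools
        # approver stands in for a human sign-off step; assumed, not a real API.
        self.approver = approver

    def execute(self, call: ToolCall, handler: Callable[..., object]) -> object:
        # Sensitive tools run only after the approver returns True.
        if call.tool in self.sensitive_tools and not self.approver(call):
            raise PermissionError(f"operator denied tool call: {call.tool}")
        return handler(**call.args)
```

With `PolicyGate({"delete_file"}, approver=lambda call: False)`, a call to a non-sensitive tool goes straight to its handler, while a `delete_file` call raises `PermissionError` before the handler ever runs.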

Enterprise integrations include Jira, GitHub, and Slack — the toolchain connectors that make agentic automation useful inside existing engineering and operations workflows. Native acceleration through NVIDIA NIM microservices provides GPU-optimized inference for teams running on NVIDIA hardware.
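The inference routing choice described earlier comes down to a small decision rule. The sketch below is one way a team might encode it, assuming a simple "data must stay local" flag; `Backend` and `pick_backend` are hypothetical names, and the preference order (NIM before vLLM) is an assumption made for the example, not documented NemoClaw behavior.

```python
from enum import Enum


class Backend(Enum):
    HOSTED = "NVIDIA-hosted endpoint"
    LOCAL_NIM = "self-hosted NIM microservice"
    LOCAL_VLLM = "self-hosted vLLM instance"


def pick_backend(data_must_stay_local: bool, local_available: list) -> Backend:
    """Choose an inference backend from a data residency requirement."""
    if not data_must_stay_local:
        # No residency constraint: default to the hosted endpoint (assumed default).
        return Backend.HOSTED
    # Residency constraint: only local backends qualify; prefer NIM, then vLLM.
    for candidate in (Backend.LOCAL_NIM, Backend.LOCAL_VLLM):
        if candidate in local_available:
            return candidate
    raise RuntimeError("data must stay local, but no local backend is available")
```

Failing loudly when no local backend exists is deliberate: silently falling back to a hosted endpoint would defeat the residency requirement.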

Head-to-Head Comparison

| Dimension | OpenClaw | NemoClaw |
|---|---|---|
| Architecture | Standalone local agent | Runtime layer within OpenShell stack |
| Deployment | Local machine (Mac/Windows/Linux) | Linux server (Ubuntu 22.04+), Docker required |
| Target user | Developers, solo creators, technical users | Enterprise teams, regulated industries |
| Security model | Admin-level local access | Sandboxed execution, policy-gated tool calls |
| Skill ecosystem | 5,000+ community skills | Curated enterprise integrations (Jira, GitHub, Slack) |
| Inference options | Configurable (API-based) | NVIDIA NIM, local vLLM, hosted endpoints |
| Compliance support | Not designed for compliance | Audit logging, operator approval workflows |
| Maturity | Production (OpenAI-backed, active development) | Alpha (as of GTC 2026) |
| Licensing | Open-source project, acquired by OpenAI (February 2026) | Open source, NVIDIA-maintained |
| GPU optimization | None native | Native NVIDIA NIM acceleration |

Security Architecture: Where the Real Difference Lives

The ClawHavoc attack exposed the structural vulnerability in OpenClaw’s community plugin model. Because skills install with the permissions of the running user, a compromised or malicious skill has access to everything that user can touch — files, environment variables, API keys stored in config files. The attack vector was not exotic: someone published a plugin with embedded credential-harvesting code, and thousands of users installed it before detection.
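To make that exposure concrete, here is a small audit helper a user could run to see which environment variables any in-process skill would be able to read. It reports names only, never values. The name patterns in `SECRET_NAME` are an assumption about common naming habits, and `exposed_secret_names` is a hypothetical helper, not part of OpenClaw.

```python
import os
import re

# Assumed heuristic: variable names containing these words often hold credentials.
SECRET_NAME = re.compile(r"(KEY|TOKEN|SECRET|PASSWORD)", re.IGNORECASE)


def exposed_secret_names(env=None):
    """Return sorted names of credential-like variables visible to this process.

    Any code running as the same user, including an installed skill,
    sees the same environment, so this list is what such code could read.
    """
    env = os.environ if env is None else env
    return sorted(name for name in env if SECRET_NAME.search(name))
```

Calling `exposed_secret_names()` with no argument inspects the current process environment; passing a dict makes the helper easy to test.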

NemoClaw’s sandboxed execution model addresses this at the architecture level. Network namespaces restrict which external connections an agent can make. Filesystem restrictions define what paths an agent can read or write. Policy controls require operator sign-off for tool calls that cross defined boundaries. This does not make NemoClaw invulnerable — alpha software by definition carries unknown risks — but it shifts the threat model from “anything the user can do, the agent can do” to a restricted set of explicitly permitted operations.
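The shift to "explicitly permitted operations" can be pictured as two allowlist checks. The sketch below is illustrative only: real enforcement in a sandbox of this kind happens in the kernel (namespaces and filesystem restrictions), not in application code, and `SandboxPolicy`, `writable_roots`, and `allowed_hosts` are hypothetical names. The path check is purely lexical and does not follow symlinks.

```python
import posixpath
from dataclasses import dataclass


@dataclass(frozen=True)
class SandboxPolicy:
    """Explicit allowlists for what an agent may write to and connect to."""
    writable_roots: tuple
    allowed_hosts: frozenset

    def may_write(self, path: str) -> bool:
        # Collapse "." and ".." segments so "/workspace/../etc" cannot slip through.
        clean = posixpath.normpath(path)
        for root in self.writable_roots:
            prefix = root.rstrip("/") + "/"
            if clean == root.rstrip("/") or clean.startswith(prefix):
                return True
        return False

    def may_connect(self, host: str) -> bool:
        # Hostnames are case-insensitive; anything not listed is denied.
        return host.lower() in self.allowed_hosts
```

Everything defaults to denied: a path or host is allowed only if it matches an entry the operator wrote down, which is the inverse of the "anything the user can do" model.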

The practical implication for enterprises is audit trails. NemoClaw’s operational tooling generates logs that compliance teams can review. OpenClaw produces no structured audit output by default. For organizations subject to SOC 2, HIPAA, or internal governance requirements, that difference alone can determine which platform is viable.
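"Structured audit output" usually means one machine-readable record per agent action. The sketch below shows what such a record might look like as a JSON line; the field names (`ts`, `actor`, `tool`, `decision`) and the `audit_record` helper are assumptions for illustration, not NemoClaw’s actual log format.

```python
import json
from datetime import datetime, timezone


def audit_record(actor, tool, decision, when=None):
    """Serialize one agent action as a single JSON line for later review."""
    when = when or datetime.now(timezone.utc)
    entry = {
        "ts": when.isoformat(),   # timezone-aware ISO 8601 timestamp
        "actor": actor,           # which agent or user requested the action
        "tool": tool,             # which tool was invoked
        "decision": decision,     # e.g. "approved" or "denied"
    }
    # sort_keys keeps the field order stable, which helps diffing and parsing.
    return json.dumps(entry, sort_keys=True)
```

Appending each returned line to a file yields a log that compliance tooling can filter by actor, tool, or decision without parsing free-form text.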

Developer Experience and Onboarding

OpenClaw’s onboarding is fast by design. Install, configure an API key, add skills from the community registry, and you have a working agent. The friction is low enough that non-developers adopt it routinely. The documentation is community-maintained and uneven in quality, but the volume of tutorials, YouTube walkthroughs, and Discord support threads compensates for that.

NemoClaw’s onboarding demands more. Setting up OpenShell, configuring Docker, installing NemoClaw on top, and writing policy files all require backend infrastructure knowledge. NVIDIA published documentation at GTC 2026 targeting developers already familiar with containerized deployments. For teams with that background, the setup is logical and well-documented. For teams expecting a consumer-grade install experience, NemoClaw is not there yet.

API maturity differs accordingly. OpenClaw exposes a well-tested interface that the community has built thousands of integrations against. NemoClaw’s API surface is narrower and documented at an alpha tier, meaning interfaces may change across versions. Teams evaluating NemoClaw for production use should account for integration maintenance as the platform matures.

Pricing and Licensing

OpenClaw’s pricing structure changed with the OpenAI acquisition. The core product remains available, and community skills are free. OpenAI has not published a detailed enterprise licensing model for OpenClaw as of this writing — pricing should be confirmed directly with OpenAI’s sales team for commercial deployments.

NemoClaw is open source under NVIDIA’s published license terms, with no per-seat or per-deployment fee for the runtime itself. Inference costs depend on deployment choice: NVIDIA-hosted endpoints carry usage-based pricing, while self-hosted NIM microservices on your own hardware carry no platform fee — only hardware and engineering overhead. Teams with existing NVIDIA GPU infrastructure can run the full stack without additional licensing costs.

The total cost comparison is not simply “free vs. paid.” OpenClaw with adequate security hardening — custom sandboxing, in-house policy tooling, incident response capacity — requires significant engineering investment. NemoClaw provides that infrastructure as part of the platform, but demands more expensive deployment prerequisites. The honest calculation depends on what infrastructure a team already operates.

Who Should Use Which Platform

OpenClaw fits developers, solo operators, and teams that move fast on local machines, can accept the current security posture, and want access to the largest community skill library available for any AI agent platform today. If your threat model tolerates admin-level local access and you are not handling regulated data, OpenClaw’s productivity ceiling is genuinely high.

NemoClaw fits teams deploying AI agents in regulated environments, handling sensitive data, or operating within organizations where audit trails and policy controls are non-negotiable. It is alpha software — production deployment requires accepting maturity risk — but the security architecture is purpose-built for the use cases where OpenClaw’s model creates unacceptable exposure.

There is also a third path: running both. NemoClaw is designed to operate as a layer within the OpenClaw stack, not to replace it. The stated target deployment is a team that wants OpenClaw’s assistant capabilities with NemoClaw’s control layer enforcing boundaries. That combination is the architecture NVIDIA built for, and it is a more accurate framing than a simple head-to-head competition.

Roadmap and What Comes Next

NemoClaw’s alpha status makes its roadmap a legitimate factor in any platform evaluation. NVIDIA has not published a public GA timeline as of the GTC 2026 launch. The Linux-only, Docker-required deployment model is an alpha constraint, and platform support will likely broaden; for now, enterprise teams on Windows Server or Kubernetes environments cannot adopt the current release.

OpenClaw’s trajectory changed with the OpenAI acquisition. The community-driven development model that produced more than 5,000 skills will likely evolve toward more managed plugin governance, particularly in response to ClawHavoc. Users tracking OpenClaw’s roadmap should watch for changes to the skill certification and installation process as OpenAI addresses the supply chain vulnerability the attack demonstrated.

Frequently Asked Questions

Is NemoClaw a direct replacement for OpenClaw?

No. NemoClaw is a runtime layer that operates within the OpenClaw stack via NVIDIA OpenShell, adding sandboxing, policy controls, and audit logging. It is not a standalone agent that replaces OpenClaw’s assistant functionality — it is designed to make OpenClaw safe enough for enterprise deployment.

What was the ClawHavoc attack?

ClawHavoc was a supply chain attack in February 2026 that compromised over 9,000 OpenClaw installations. Attackers embedded credential-harvesting code into malicious community plugins. The incident prompted Meta and LangChain to restrict employee use of OpenClaw and accelerated enterprise interest in more controlled deployment models like NemoClaw.

Does NemoClaw work on Windows or macOS?

As of the GTC 2026 alpha release, NemoClaw targets Ubuntu 22.04+ on Linux and requires Docker and NVIDIA OpenShell. Windows and macOS support are not documented in the current release. This is an alpha constraint that may change as the platform matures.

Is NemoClaw free to use?

NemoClaw is open source with no licensing fee for the runtime. Inference costs depend on whether you use NVIDIA-hosted endpoints (usage-based pricing) or self-hosted NIM microservices on your own GPU hardware. Teams with existing NVIDIA infrastructure can run the stack without additional platform fees.

Who acquired OpenClaw and when?

OpenAI acquired OpenClaw in February 2026. OpenClaw was originally created by developer Peter Steinberger and built a large community following before the acquisition. The project continues under OpenAI stewardship.

Which platform is better for enterprise compliance?

NemoClaw is designed for compliance-sensitive environments. It provides structured audit logs, operator-approval policy controls, and sandboxed execution that limit what agents can access. OpenClaw was not built with enterprise compliance requirements in mind and lacks native audit trail generation.

Can I use NemoClaw with my own GPU hardware?

Yes. NemoClaw supports local inference via self-hosted NIM microservices and vLLM, alongside NVIDIA-hosted endpoint routing. Teams running NVIDIA GPU infrastructure can route inference locally without sending data to external endpoints.

Are NemoClaw and OpenClaw competitors or complements?

More complements than competitors. NemoClaw’s documented architecture positions it as a control layer that runs on top of OpenClaw via OpenShell. The intended deployment is both tools working together — OpenClaw providing assistant capabilities, NemoClaw enforcing enterprise security policies around them.

The Bottom Line

NemoClaw and OpenClaw represent two honest answers to the same question: how much control is the right amount for AI agents? OpenClaw chose speed, community, and local autonomy — and paid for it with a supply chain attack that compromised thousands of deployments. NemoClaw chose auditable boundaries and enterprise integration — and is currently paying for that with alpha-stage maturity and a deployment model that excludes non-Linux environments.

Neither answer is wrong. They serve different users in different contexts. The technical reality — that NemoClaw runs as a layer within the OpenClaw stack rather than as a replacement — suggests the market may settle on a combined model: OpenClaw’s assistant capabilities bounded by NemoClaw’s policy controls. That is the architecture NVIDIA actually built for, and it is a more useful frame than a head-to-head winner-loser comparison.